Octree Transformer: Autoregressive 3D Shape Generation on Hierarchically Structured Sequences

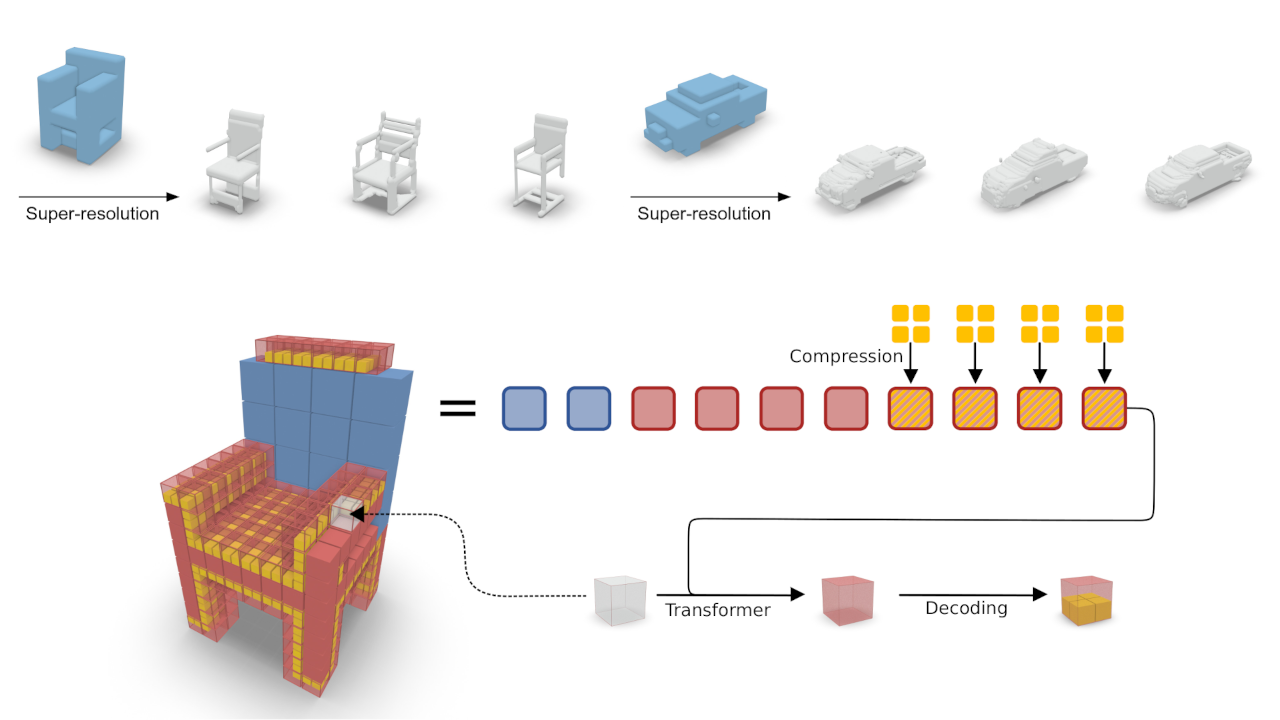

Autoregressive models have proven to be very powerful in NLP text generation tasks and have lately gained popularity for image generation as well. However, they have seen limited use for the synthesis of 3D shapes so far. This is mainly due to the lack of a straightforward way to linearize 3D data, as well as to scaling problems with the length of the resulting sequences when describing complex shapes. In this work we address both of these problems. We use octrees as a compact hierarchical shape representation that can be sequentialized by traversal ordering. Moreover, we introduce an adaptive compression scheme that significantly reduces sequence lengths and thus enables their effective generation with a transformer, while still allowing fully autoregressive sampling and parallel training. We demonstrate the performance of our model by performing super-resolution and by comparing against the state of the art in shape generation.
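To make the idea of sequentializing an octree by traversal ordering concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not the authors' implementation: the token ids (`EMPTY`, `MIXED`, `FULL`), the breadth-first order, the child ordering, and the function names `classify` and `octree_sequence` are all hypothetical choices, and the adaptive compression scheme is not covered.

```python
from collections import deque

# Hypothetical token ids for the three possible node states (an assumption).
EMPTY, MIXED, FULL = 1, 2, 3


def classify(occupied, origin, size):
    """Label the cube at `origin` with edge length `size` as empty, full, or mixed."""
    x0, y0, z0 = origin
    count = sum(
        (x, y, z) in occupied
        for x in range(x0, x0 + size)
        for y in range(y0, y0 + size)
        for z in range(z0, z0 + size)
    )
    if count == 0:
        return EMPTY
    if count == size ** 3:
        return FULL
    return MIXED


def octree_sequence(occupied, size):
    """Linearize a voxel set into a token sequence by breadth-first octree traversal.

    `occupied` is a set of (x, y, z) integer coordinates; `size` is the grid
    edge length and is assumed to be a power of two.
    """
    tokens = []
    queue = deque([((0, 0, 0), size)])
    while queue:
        origin, s = queue.popleft()
        label = classify(occupied, origin, s)
        tokens.append(label)
        # Only mixed nodes are subdivided; empty and full nodes are leaves.
        if label == MIXED:
            h = s // 2
            x0, y0, z0 = origin
            for dx in (0, h):
                for dy in (0, h):
                    for dz in (0, h):
                        queue.append(((x0 + dx, y0 + dy, z0 + dz), h))
    return tokens
```

For a single occupied voxel at `(0, 0, 0)` in a 4×4×4 grid, `octree_sequence({(0, 0, 0)}, 4)` yields 17 tokens (one root, eight children, eight grandchildren) rather than 64 voxel values, because uniform regions are stored as a single leaf. For dense, detailed shapes the sequence still grows quickly with resolution, which is the scaling problem the abstract refers to.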

@inproceedings{ibing_octree,
  author    = {Moritz Ibing and Gregor Kobsik and Leif Kobbelt},
  title     = {Octree Transformer: Autoregressive 3D Shape Generation on Hierarchically Structured Sequences},
  booktitle = {{IEEE/CVF} Conference on Computer Vision and Pattern Recognition Workshops, {CVPR} Workshops 2023},
  publisher = {{IEEE}},
  year      = {2023},
}